Meet OmniXAI

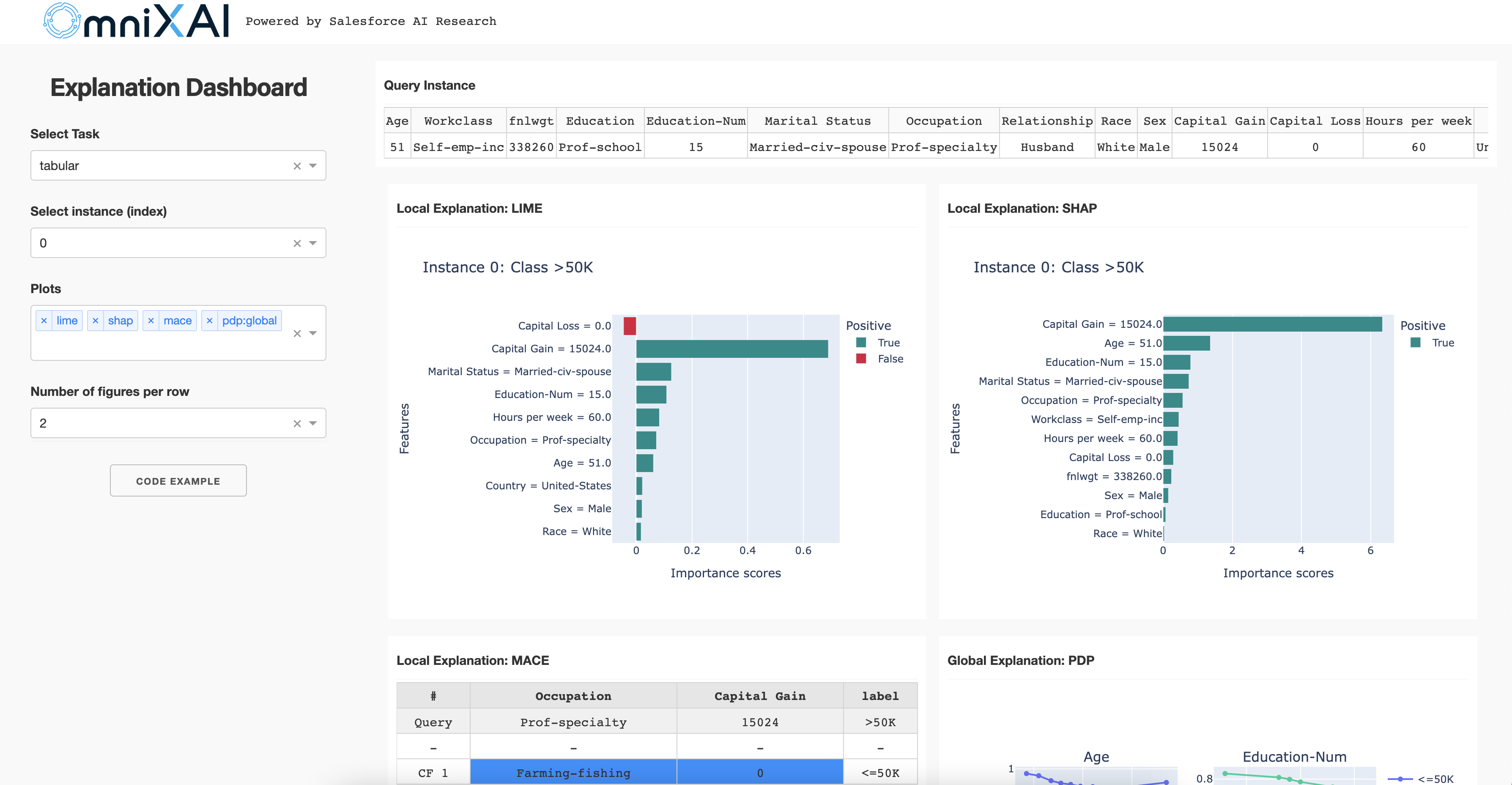

Omni eXplainable AI (OmniXAI) is an open-source Python library for explainable AI. It offers omni-way explainable AI and interpretable machine learning capabilities that address many of the pain points in explaining decisions made by AI models in practice. OmniXAI aims to be a comprehensive one-stop library that makes explainable AI easy for data scientists, ML researchers, and practitioners who need explanations for various types of data and models at different stages of the ML process. It includes a rich family of explanation methods and provides an easy-to-use unified interface, so you can generate explanations for your applications by writing only a few lines of code. OmniXAI also offers a dashboard for visualizing explanations, giving you more insight into model decisions.
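To make the idea of a unified interface concrete, here is a minimal, standard-library-only sketch of the pattern: one wrapper object that runs several explanation methods against the same model and returns their results keyed by method name. This is not OmniXAI's actual API. `UnifiedExplainer`, `REGISTRY`, `occlusion`, `sensitivity`, and `toy_model` are hypothetical names invented for illustration, and the two methods are deliberately simple stand-ins for the richer family of methods the library provides.

```python
from typing import Callable, Dict, List, Sequence

# Hypothetical type aliases for this sketch (not part of OmniXAI).
Model = Callable[[Sequence[float]], float]
Explainer = Callable[[Model, Sequence[float]], List[float]]


def occlusion(model: Model, x: Sequence[float]) -> List[float]:
    """Score each feature by how much the prediction drops when it is set to 0."""
    base = model(x)
    scores = []
    for i in range(len(x)):
        occluded = list(x)
        occluded[i] = 0.0
        scores.append(base - model(occluded))
    return scores


def sensitivity(model: Model, x: Sequence[float], eps: float = 1e-4) -> List[float]:
    """Score each feature by a central finite-difference slope of the prediction."""
    scores = []
    for i in range(len(x)):
        up, down = list(x), list(x)
        up[i] += eps
        down[i] -= eps
        scores.append((model(up) - model(down)) / (2 * eps))
    return scores


# Hypothetical registry mapping method names to implementations.
REGISTRY: Dict[str, Explainer] = {
    "occlusion": occlusion,
    "sensitivity": sensitivity,
}


class UnifiedExplainer:
    """One entry point that runs every requested method on the same model."""

    def __init__(self, methods: List[str], model: Model) -> None:
        unknown = [m for m in methods if m not in REGISTRY]
        if unknown:
            raise ValueError(f"unknown explanation methods: {unknown}")
        self.methods = methods
        self.model = model

    def explain(self, x: Sequence[float]) -> Dict[str, List[float]]:
        return {name: REGISTRY[name](self.model, x) for name in self.methods}


def toy_model(x: Sequence[float]) -> float:
    """A toy linear model used only to exercise the sketch."""
    return 2.0 * x[0] - 1.0 * x[1] + 0.5


# The "few lines of code" a caller writes once the interface exists.
explainer = UnifiedExplainer(["occlusion", "sensitivity"], toy_model)
explanations = explainer.explain([3.0, 4.0])
for name, scores in explanations.items():
    print(name, scores)
```

The design choice worth noting is that the caller selects methods by name and receives all results in one dictionary, so adding a new method only means registering another function rather than changing calling code.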